We explore the web for you!

Automated crawling of websites and web pages

Web Crawler is a set of software tools from Synaptica that provides automated, highly flexible and configurable web scanning.

With Web Crawler you can set up and run campaigns for the large-scale exploration of websites and web pages, extracting and interpreting the content and data of interest and structuring them in fully customizable reports.

Web Crawler can interact with web pages by filling out forms and entering data based on differentiated profiles, and it can record the outcome of complex processes and wizards such as those typical of online quote calculators.

Highly reliable results can be achieved with maximum safety thanks to an accurate system for monitoring and verifying the output data. The suite is built on proprietary software (iMacros) and consists of the following modules:

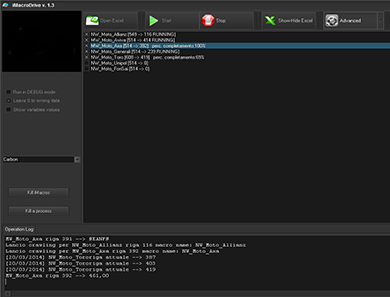

| iMacroDriver

iMacroDriver is Synaptica software that starts multiple iMacros instances in parallel against a single input and output, reducing extraction time even for massive data sets by increasing the degree of parallelism. iMacroDriver captures the extraction results and builds a data sheet (for example, in Excel) with the crawling results in real time. It can also perform consistency checks on the output data, report errors, and even take screenshots of the pages visited, the data, and the results of procedures.
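To give a rough idea of the pattern described here (parallel workers, one shared input, one shared output sheet, a consistency check on each row), the following is a minimal Python sketch. It is not iMacroDriver's actual code or API: `run_extraction`, `is_consistent`, `crawl`, the profile fields, and the CSV layout are all hypothetical names chosen for illustration, and the worker only simulates a macro run.

```python
import csv
import io
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_extraction(profile):
    """Hypothetical stand-in for one macro run; returns the extracted fields."""
    # A real worker would drive a browser macro here. This one fakes a quote.
    return {"profile": profile["name"], "quote": str(100 + 10 * profile["age"])}


def is_consistent(row):
    """Illustrative check: the profile is present and the quote is numeric."""
    return bool(row.get("profile")) and row.get("quote", "").isdigit()


def crawl(profiles, out, workers=4):
    """Run extractions in parallel and append each result to one shared sheet."""
    writer = csv.DictWriter(out, fieldnames=["profile", "quote", "status"])
    writer.writeheader()
    errors = []
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {pool.submit(run_extraction, p): p for p in profiles}
        # Rows are written as soon as each worker finishes. Only this thread
        # touches the writer, so no locking is needed.
        for future in as_completed(futures):
            profile = futures[future]
            try:
                row = future.result()
                row["status"] = "ok" if is_consistent(row) else "inconsistent"
            except Exception as exc:
                row = {"profile": profile["name"], "quote": "", "status": "error"}
                errors.append((profile["name"], str(exc)))
            writer.writerow(row)
    return errors


if __name__ == "__main__":
    sheet = io.StringIO()
    failed = crawl([{"name": "a", "age": 30}, {"name": "b", "age": 40}], sheet)
    print(sheet.getvalue())
    print("errors:", failed)
```

Keeping all writes in the coordinating thread is one simple way to let many workers share a single output file. Raising `workers` increases the degree of parallelism without changing the rest of the code.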

Captcha Server/Client

With forms on web pages increasingly protected by captchas, this type of application needs services that decode captchas in real time. Many such services exist, but it is often necessary to use more than one at the same time, and even then the correct decoding and entry of the captcha is not always guaranteed. Captcha Server can interface with multiple decoding providers in parallel. Through a simple client application available for Windows and Android, it also lets an operator receive the captchas that the providers could not decode and solve the most difficult ones manually. |

|
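The Captcha Server idea described above (several decoding providers queried in parallel, with a manual fallback for captchas none of them can solve) can be sketched in Python as follows. This is an illustrative sketch only, not the product's implementation: `solve_captcha`, the provider callables, and the `manual_queue` are hypothetical, and each provider is assumed to be a plain function that takes the image bytes, enforces its own timeout, and returns the decoded text or an empty string.

```python
import queue
from concurrent.futures import ThreadPoolExecutor, as_completed


def solve_captcha(image, providers, manual_queue):
    """Ask every provider in parallel and return the first non-empty answer.

    If no provider returns an answer, the image is placed on manual_queue
    for a human operator and None is returned.
    """
    with ThreadPoolExecutor(max_workers=max(1, len(providers))) as pool:
        futures = [pool.submit(provider, image) for provider in providers]
        for future in as_completed(futures):
            try:
                answer = future.result()
            except Exception:
                # A failing provider is skipped; the others may still answer.
                continue
            if answer:
                return answer
    # No provider produced an answer: hand the captcha to a human.
    manual_queue.put(image)
    return None


if __name__ == "__main__":
    def provider_down(img):
        raise RuntimeError("service unavailable")

    def provider_ok(img):
        return "X7K9"

    pending = queue.Queue()
    print(solve_captcha(b"fake-image", [provider_down, provider_ok], pending))
```

In this sketch the pool still waits for the remaining providers to finish before returning, which keeps the code short. A production service would more likely cancel or ignore the slower requests once one answer has arrived.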